My server's storage is set up as follows:

    gpadmin-local@pve1:~$ lsblk
    NAME                           MAJ:MIN RM   SIZE RO TYPE MOUNTPOINT
    sda                              8:0     0   1.8T  0 disk
    └─sda1                           8:1     0   1.8T  0 part
    sdb                              8:16    0   3.6T  0 disk
    └─sdb1                           8:17    0   3.6T  0 part
    nvme1n1                        259:0     0 111.8G  0 disk
    ├─nvme1n1p1                    259:1     0  1007K  0 part
    ├─nvme1n1p2                    259:2     0   512M  0 part /boot/efi
    └─nvme1n1p3                    259:3     0 111.3G  0 part
      ├─pve-swap                   253:7     0     8G  0 lvm  [SWAP]
      ├─pve-root                   253:8     0  27.8G  0 lvm  /
      └─pve-iso                    253:9     0  75.5G  0 lvm  /mnt/iso
    nvme0n1                        259:4     0 476.9G  0 disk
    ├─VMContainer-vm--100--disk--0 253:0     0    20G  0 lvm
    ├─VMContainer-vm--101--disk--0 253:1     0    40G  0 lvm
    ├─VMContainer-vm--300--disk--0 253:2     0    20G  0 lvm
    ├─VMContainer-vm--102--disk--0 253:3     0    32G  0 lvm
    ├─VMContainer-vm--350--disk--0 253:4     0     8G  0 lvm
    ├─VMContainer-vm--103--disk--0 253:5     0    32G  0 lvm
    ├─VMContainer-vm--103--disk--1 253:6     0    32G  0 lvm
    └─VMContainer-vm--102--disk--1 253:10    0    40G  0 lvm

To store more virtual machines, I need more space, so I am planning to get a 1TB NVMe drive and an NVMe adapter for migration purposes. My storage is named VMContainer and holds both VMs and LXC containers. I should mention that I am well-versed in using Linux (CompTIA A+, Network+, Security+, CySA+ (passed this Tuesday), and Cisco CCNA), so I consider myself an advanced Linux user. I am also used to creating and resizing LVM partitions, so I have that covered.
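As a rough sizing check for the migration, the logical volumes listed under nvme0n1 in the lsblk output can be totalled. This is a minimal sketch: the sizes are copied by hand from that listing (in the units lsblk reports as G), and the function name is only for illustration.

```shell
#!/bin/sh
# Sizes (in G, as reported by lsblk) of the eight logical volumes on nvme0n1:
# vm-100-disk-0, vm-101-disk-0, vm-300-disk-0, vm-102-disk-0,
# vm-350-disk-0, vm-103-disk-0, vm-103-disk-1, vm-102-disk-1
total_allocated() {
    printf '%s\n' 20 40 20 32 8 32 32 40 |
        awk '{ sum += $1 } END { print sum }'
}

total_allocated   # prints 224
```

So about 224G of the 476.9G drive is currently allocated to guest disks, which is the amount that would have to be copied to the new drive.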

If I am correct, I cannot rename the storage from VMContainer to VMContainer-tmp, so will assigning the same name to a new storage on the 1TB NVMe drive (connected over USB) be a problem? I want to create plain LVM storage on the 1TB NVMe drive and not LVM Thin, because I cannot create snapshots using LVM Thin, which I only learned a few months ago.

Put simply, my process is as follows:

- Insert the 1TB NVMe drive into a USB-C NVMe enclosure.

- Connect the 1TB NVMe drive to the server using a USB-C cable.

- Provision the 1TB NVMe drive as LVM storage in Proxmox.

- Turn off all VMs and containers.

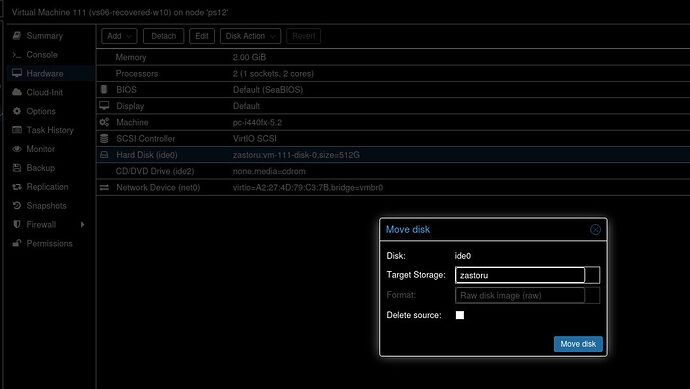

- Migrate the VMs and containers over to the 1TB NVMe drive in the enclosure.

- Shut down the server and unplug the power cable from the PSU.

- Remove the 500GB NVMe drive from the motherboard’s M.2 slot.

- Take the 1TB drive out of the enclosure and install it in the motherboard’s M.2 slot.

- Plug in the PSU and turn the server back on.

- From a live USB image such as Ubuntu or Pop!_OS, mount the root drive and modify the `/etc/fstab` file so that Linux can read the new LVM partition.

- Make sure that the VMs and containers are in place. If all goes well, the drive replacement is a success.
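The provisioning and migration steps above could be sketched roughly as the commands below. This is a dry run that only prints the commands and runs nothing. Several details are assumptions for illustration and not taken from my setup: the device path `/dev/sdX`, the volume group and storage name `VMContainer2`, the guest types (100 as a VM, 300 as a container), and the disk key `scsi0`. The subcommand spellings (`qm move-disk`, `pct move-volume`) may also differ between Proxmox VE releases.

```shell
#!/bin/sh
# Dry-run sketch: prints the planned commands, executes none of them.
NEW_DISK="/dev/sdX"        # placeholder for the USB-attached 1TB NVMe drive
NEW_VG="VMContainer2"      # hypothetical volume group name on the new drive
NEW_STORE="VMContainer2"   # hypothetical Proxmox storage ID

plan() {
    # Provision the new drive as plain (thick) LVM storage.
    echo "pvcreate $NEW_DISK"
    echo "vgcreate $NEW_VG $NEW_DISK"
    echo "pvesm add lvm $NEW_STORE --vgname $NEW_VG --content images,rootdir"
    # Stop the guests first (assumes 100 is a VM and 300 is a container).
    echo "qm shutdown 100"
    echo "pct shutdown 300"
    # Move one VM disk and one container volume to the new storage.
    echo "qm move-disk 100 scsi0 $NEW_STORE"
    echo "pct move-volume 300 rootfs $NEW_STORE"
}

plan
```

The shutdown and move lines would be repeated for each guest and each of its disks.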

I have never tried migrating an LVM partition from one drive to another, and in my case the 500GB NVMe drive uses LVM Thin storage rather than plain LVM. Am I overcomplicating this storage replacement? Can it really be done?